New Tools in iOS 11 for Developers

2024-04-18 11:56:27 • Filed to: iOS PDF Apps • Proven solutions

Every time Apple introduces a new operating system, the company offers new tools for developers and new apps for users. Apple has just released iOS 11 to developers, and the new version brings many new tools. With an improved HomeKit, the addition of ARKit, and Core ML, Apple gives developers the tools to build augmented reality apps, smarter apps, and much more. Let's take a look at the new tools for developers and what you can do with them.

What Are the New Tools for Developers

1. ARKit

The newest platform Apple has brought to iOS lets developers easily build augmented reality experiences into their applications. ARKit works with the camera, processors, and motion sensors of an Apple device, be it an iPhone or iPad, so developers can now create apps such as useful measuring tools.

For example, one app that developers have already built and demonstrated lets users measure the distance between two locations. The user taps two locations, and the app shows the distance between them. But that is just one example.
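The distance calculation behind such a measuring app can be sketched in a few lines of Swift. This is a minimal sketch, not the code of the app described above: `Point3D` and `distance(from:to:)` are hypothetical names, and it assumes the two tapped locations have already been converted into 3D world positions (ARKit reports world coordinates in meters).

```swift
import Foundation

// Hypothetical stand-in for a 3D world position; a real app would read
// these coordinates from ARKit after the user taps the screen.
struct Point3D {
    var x: Double
    var y: Double
    var z: Double
}

// Straight-line (Euclidean) distance between two points, in the same
// unit as the inputs.
func distance(from a: Point3D, to b: Point3D) -> Double {
    let dx = b.x - a.x
    let dy = b.y - a.y
    let dz = b.z - a.z
    return (dx * dx + dy * dy + dz * dz).squareRoot()
}

// Usage: two tapped points, 3 units apart on x and 4 units apart on z.
let firstTap = Point3D(x: 0, y: 0, z: 0)
let secondTap = Point3D(x: 3, y: 0, z: 4)
print(distance(from: firstTap, to: secondTap))
```

Keeping the math separate from the AR session code makes it easy to check on its own, without a device or camera.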

Gaming apps will also benefit from ARKit in iOS 11, with some developers already building a Minecraft AR app. This app, for example, lets users place blocks around their real-world environment.

2. HomeKit

HomeKit has been part of the Apple arsenal for some time now. But with every new iOS release, Apple updates HomeKit, and iOS 11 is no exception. Here are some of the changes.

New product types, including sprinklers and faucets. With those two, HomeKit now supports 16 product types, including sensors, window shades, garage doors, humidifiers, air conditioners, fans, cameras, thermostats, and much more. We still have to wait for major appliances like washing machines and refrigerators, but we are getting there. Faucets and sprinklers will allow integration with products that initiate water flow.

Setting up HomeKit accessories is easier, thanks to pairing with QR codes and NFC. The QR codes will be placed on accessory packaging so that users can scan them within the Home app.

3. Core ML

One thing was clear during WWDC 2017: Apple wants to go all in on machine learning. The Cupertino company is trying to make it as easy as possible for developers to join in. Last year Apple introduced the Metal CNN and BNNS frameworks for basic convolutional networks. This year, Apple went a step further and introduced Core ML in iOS 11, a toolkit that makes it easy for developers to put ML models into their apps.

Core ML got the most attention during WWDC, and it is understandable. Core ML is arguably the most important new framework developers will use in their apps, because it allows them to build more intelligent apps with machine learning. The new foundational framework is used across Apple products, including Camera, Siri, and QuickType. With just a few lines of code, you can build apps with lots of new features.

Core ML supports extensive deep learning with more than 30 layer types, as well as standard models such as SVMs and tree ensembles. Built on low-level technologies, Core ML takes advantage of the CPU and GPU for maximum efficiency.
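To give a sense of what "a few lines of code" can look like, here is a minimal sketch of running a prediction with a Core ML model. It only runs on Apple platforms where the Core ML framework is available. The helper names `predict` and `topLabel`, and the `modelURL`, `inputs`, and `outputName` parameters, are assumptions made for illustration; the feature names depend entirely on the model you ship.

```swift
import CoreML
import Foundation

// Loads a compiled Core ML model (.mlmodelc) and runs a single prediction.
// `modelURL`, `inputs`, and `outputName` are hypothetical placeholders.
func predict(modelURL: URL,
             inputs: [String: Any],
             outputName: String) throws -> MLFeatureValue? {
    let model = try MLModel(contentsOf: modelURL)
    let provider = try MLDictionaryFeatureProvider(dictionary: inputs)
    let output = try model.prediction(from: provider)
    return output.featureValue(for: outputName)
}

// Picks the most probable label from a classifier-style result that maps
// labels to probabilities. Returns nil for an empty dictionary.
func topLabel(in probabilities: [String: Double]) -> String? {
    return probabilities.max { $0.value < $1.value }?.key
}
```

In practice, Xcode also generates a typed Swift class for each model added to a project, which is usually more convenient than the dictionary-based calls shown here.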

4. Depth Map API

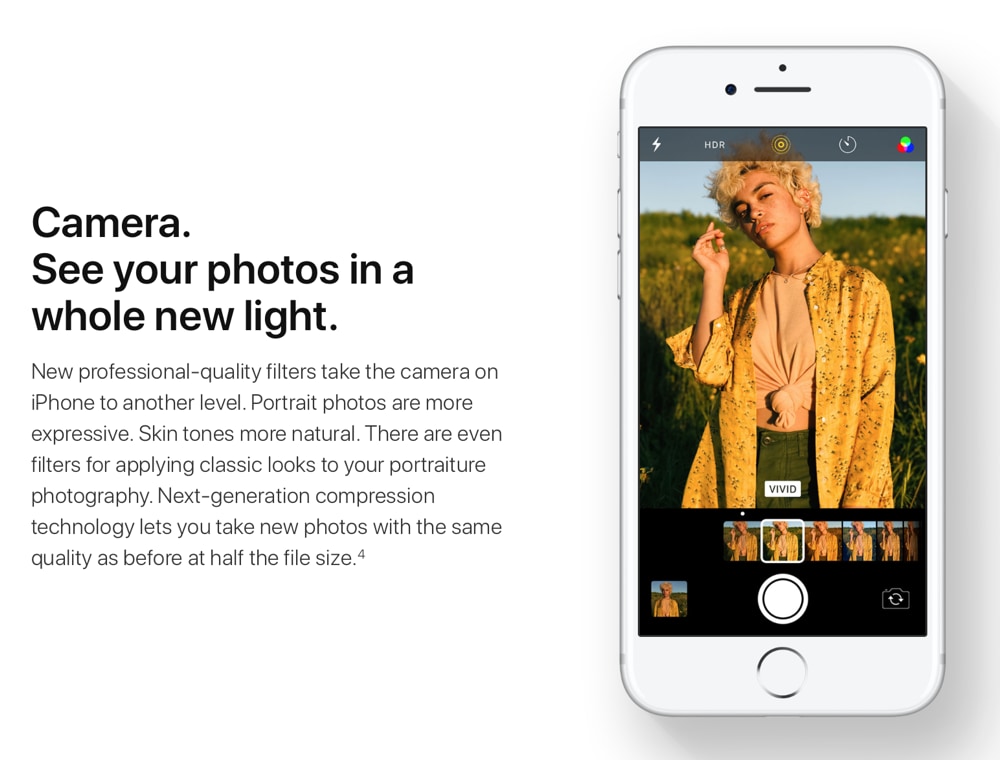

The new Depth Map API is essentially a new feature for the camera. The camera has been updated in general with improved video and image compression. As for the Depth Map API in iOS 11, it gives developers access to the depth information behind Portrait Mode, so they can create new and custom depth filters.
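The idea of a custom depth filter can be illustrated with plain arrays. The following is a simplified sketch, not the camera API itself: `dimBackground` is a hypothetical helper, and it assumes the image brightness and the depth map have already been extracted into arrays of equal length, with larger depth values meaning farther from the camera. On a device, the depth data would come from the camera through AVFoundation.

```swift
import Foundation

// Darkens every pixel that lies farther away than `maxDepth`, leaving the
// foreground untouched. `luminance` and `depth` must have the same length.
func dimBackground(luminance: [Double],
                   depth: [Double],
                   maxDepth: Double,
                   factor: Double = 0.3) -> [Double] {
    precondition(luminance.count == depth.count,
                 "luminance and depth must describe the same pixels")
    return zip(luminance, depth).map { pair in
        // pair.0 is the pixel brightness, pair.1 is its depth.
        pair.1 > maxDepth ? pair.0 * factor : pair.0
    }
}

// Usage: three pixels, the last two are behind the 1.0 cutoff.
let filtered = dimBackground(luminance: [1.0, 0.8, 0.5],
                             depth: [0.5, 2.0, 3.0],
                             maxDepth: 1.0,
                             factor: 0.5)
print(filtered)
```

The same per-pixel pattern extends to other effects, such as blurring or desaturating the background instead of dimming it.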

Elise Williams

Chief Editor